What about joining our vvvvser skills to build a proper library of particle plugins/modules, and get an advanced new way to manage particles in vvvv?

i have a dream… ahahah

no joke, i think we could make giant steps in making particle systems easier to patch in vvvv.

Here i’ll explain my ideas of what this library could look like. please feel free to join the discussion! any idea is welcome.

If you are interested in this “project”, i highly recommend reading these previous posts, where the idea comes from:

forum/perfect-plugins-integration-into-vvvv-workflow

forum/flocking-behavior-simulation-plugin

As you can see from the ParticlesGPU library, i’m interested in using the GPU for particle systems.

the big advantage of a particle system on the GPU is that you can have a huge number of particles.

the downside is that the GPU is not as flexible and versatile as the CPU.

this means you can’t easily integrate a GPU particle system into any vvvv scenario and use it for purposes other than rendering particles on the screen.

with a CPU particle system, instead, you have spreads of data that you can use in any way, controlling anything else in your patch (whatever you can do with a spread of numbers).

that’s why both CPU particles and GPU particles are interesting.

What to achieve:

- a complex particle simulation is the result of many different functions working together and generating a unique complex behaviour for each particle. The main idea is to build specific plugins/modules that can work together and can be easily combined in order to obtain complex behaviours.

- In order to allow these plugins to work together, we need to agree on a system of rules, a PROTOCOL, to keep in mind while writing/patching plugins/modules, so that we can be sure any new plugin/module will integrate perfectly with the other features.

Main Ideas:

Shared sources

In order to allow multiple behaviour plugins to work together, they need access to all the data they require. This means that all the “feedback” information (i mean the data from the last frame. can anybody tell me a proper term for this data?) must be available in the patch, using holy framedelay nodes to retrieve the data from the last frame’s evaluation. an example:

https://vvvv.org/sites/default/files/imagecache/large/images/Complex%20Modular%20Behaviour.png

here the current position of the particles comes from the framedelay at the top, and all the plugins at the bottom can read this data.

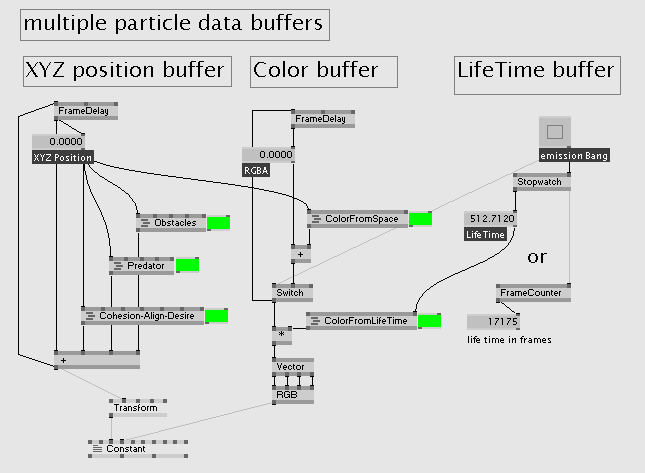

In a complex system we could have more than XYZ position data for each particle. there could also be XYZ Velocity, Color, LifeTime, XYZ Scale, … in this case we just need to create extra framedelay loops to store all the data we need.

all the behaviour plugins will retrieve from these cycles just what they need for evaluation.

here i built separate framedelay buffers, but we could combine them into a single nD vector buffer.

i highlighted the plugins/modules of the system in green; each one retrieves just the data it needs.
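to make the idea of shared framedelay buffers a bit more concrete, here is a rough sketch in Python (not vvvv code). everything in it is hypothetical: `FrameDelay`, `drift` and `step` are names i made up for illustration, and spreads are modelled as plain lists of tuples. it only shows the principle: behaviours read last frame’s Position from a shared buffer, and the result is written back for the next frame.

```python
# Hypothetical sketch: FrameDelay, drift and step are illustrative names,
# not part of vvvv or of any existing plugin.

class FrameDelay:
    """Stores a spread (a list) and hands back the value written last frame."""

    def __init__(self, initial):
        self._stored = list(initial)

    def read(self):
        # Returns a copy of what was written during the previous frame.
        return list(self._stored)

    def write(self, values):
        # The written values become visible to readers on the next frame.
        self._stored = list(values)


def drift(positions):
    """Toy behaviour: reads Position, outputs a constant Velocity per particle."""
    return [(0.1, 0.0, 0.0) for _ in positions]


def step(buffers, behaviours):
    """One frame: each behaviour reads the shared Position, velocities are summed."""
    positions = buffers["Position"].read()
    total = [(0.0, 0.0, 0.0)] * len(positions)
    for behaviour in behaviours:
        velocities = behaviour(positions)
        total = [tuple(a + b for a, b in zip(t, v))
                 for t, v in zip(total, velocities)]
    new_positions = [tuple(p + v for p, v in zip(pos, vel))
                     for pos, vel in zip(positions, total)]
    buffers["Position"].write(new_positions)
    return new_positions


# One named buffer per kind of data; more ("Velocity", "Color", ...) can be added.
buffers = {"Position": FrameDelay([(0.0, 0.0, 0.0), (1.0, 1.0, 1.0)])}
frame1 = step(buffers, [drift])
frame2 = step(buffers, [drift])
```

keeping the buffers in a dictionary keyed by name is just one possible layout; a single nD vector buffer would work the same way, as long as every module knows where to find the data it needs.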

Plugin Modularity

- each plugin must be “minimalistic”, in the sense that it performs only specific tasks. it can then be integrated in the “framework” patch of the particle system, where all the data needed for evaluation is stored.

- Input/Output of plugins/modules must follow a standard syntax, eg. “Position”, “Velocity”, “Scale”, “Color”, “LifeTime”, … devs must try to use just these common data types in order to increase connectivity.

https://vvvv.org/sites/default/files/imagecache/large/images/data%20type%20syntax%20k.png

as you can see in this example, all the behaviour plugins read the same Position data and output Velocity data.

i didn’t label it “XYZ Position” or “XY Position” because the number of dimensions is just a configuration of our system. Ideally plugins should work in both 2D and 3D scenarios.

eg. look at the ForceField plugin in this example: it has a 2D/3D toggle that tells the plugin whether we are in 2 or 3 dimensions.

In this particle system there’s just the Position Cycle. In an advanced simulation we would probably also need a Velocity Cycle to control accelerated movement. As i showed before, we’ll just add a Velocity Cycle to our patch, and all the plugins that require Velocity input will get it from there.
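a rough sketch of what such a behaviour could look like, written in Python just to show the “Position in, Velocity out” convention together with a dimension setting. this is not the actual ForceField plugin: the function `force_field`, its `center`, `strength` and `dims` parameters, and the simple attractor maths are all my assumptions for illustration.

```python
import math

# Hypothetical behaviour: NOT the real ForceField plugin, just an attractor
# that follows the "Position in, Velocity out" convention.

def force_field(positions, center, strength=1.0, dims=3):
    """Return one Velocity per particle, pointing toward `center`.

    `dims` plays the role of the 2D/3D toggle: only the first `dims`
    components of each position are used.
    """
    velocities = []
    for pos in positions:
        delta = [c - p for p, c in zip(pos[:dims], center[:dims])]
        dist = math.sqrt(sum(d * d for d in delta))
        if dist == 0.0:
            # A particle sitting exactly on the center gets no push.
            velocities.append(tuple(0.0 for _ in range(dims)))
        else:
            velocities.append(tuple(strength * d / dist for d in delta))
    return velocities


vel_2d = force_field([(3.0, 4.0)], center=(0.0, 0.0), dims=2)
vel_3d = force_field([(0.0, 0.0, 2.0)], center=(0.0, 0.0, 0.0), dims=3)
```

because the function only ever touches the first `dims` components, the same code can serve both the 2D and the 3D patch without a separate version for each.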
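here is a small sketch of how a Velocity Cycle next to the Position Cycle could give accelerated movement. it is Python, not a patch, and the names (`integrate`, `state`, `gravity`) plus the choice of updating velocity first and then position (semi-implicit Euler) are my assumptions, not something already decided for the library.

```python
# Hypothetical sketch of a Position Cycle plus a Velocity Cycle.
# The integration scheme (semi-implicit Euler) is an assumption.

def integrate(positions, velocities, accelerations, dt):
    """Advance one frame: update Velocity from acceleration, then Position."""
    new_velocities = [tuple(v + a * dt for v, a in zip(vel, acc))
                      for vel, acc in zip(velocities, accelerations)]
    new_positions = [tuple(p + v * dt for p, v in zip(pos, vel))
                     for pos, vel in zip(positions, new_velocities)]
    return new_positions, new_velocities


# Both spreads are fed back every frame, like two framedelay loops.
state = {"Position": [(0.0, 0.0, 0.0)], "Velocity": [(1.0, 0.0, 0.0)]}
gravity = [(0.0, -1.0, 0.0)]  # one acceleration vector per particle

for _ in range(2):
    state["Position"], state["Velocity"] = integrate(
        state["Position"], state["Velocity"], gravity, dt=0.5)
```

plugins that output acceleration (or forces) would feed the `accelerations` input, while plugins that need Velocity simply read it from the same cycle.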

damn… i’ve to go… i’ll continue this post later

in the meantime feel free to comment and share your ideas/criticism