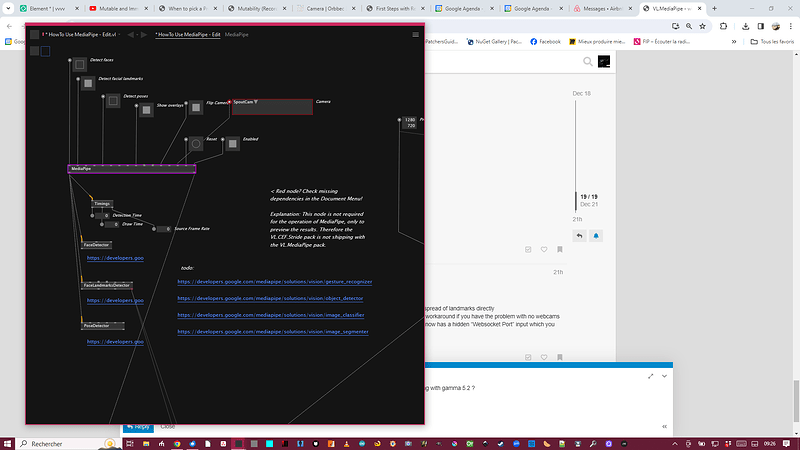

I was curious what this MediaPipe thing everyone is talking about is, and stumbled upon mediapipe-touchdesigner by Dom Scott and Torin Blankensmith. The way they implemented it for TouchDesigner made it possible to use it the same way in vvvv, so full credit to them!

To see what’s possible, watch their intro video:

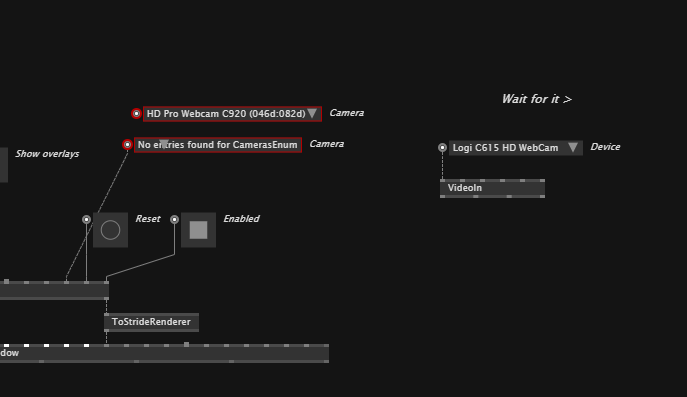

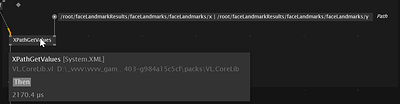

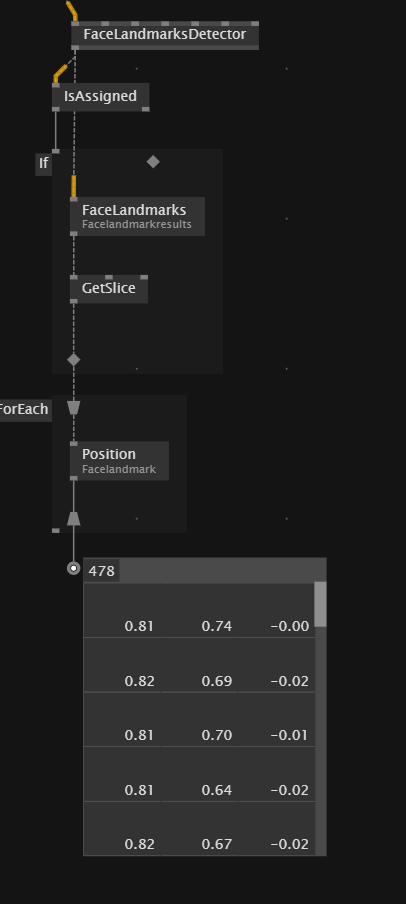

Status: It runs the models and receives their results as JSON. For Face, FaceLandmark and Pose there is a node to configure the model and get back an XElement holding all the info. Similar nodes still need to be built for the remaining models. Then the big question is how best to return the data for each model (instead of as an XElement) so that it can be accessed most conveniently. Any thoughts?

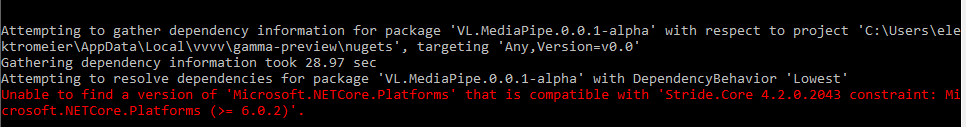

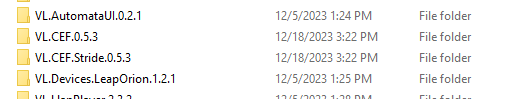

Get the NuGet: VL.MediaPipe