Hello,

I would like to achieve what is called Texture Motion Detection (or Acceleration). Basically, it is obtained by delaying the texture in time and subtracting it from the texture of the current frame.

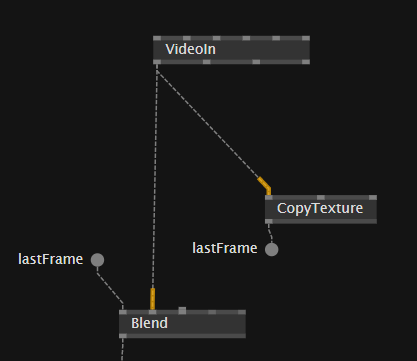

I tried storing my VideoIn in a pad (as in “How to Feedback a Texture with a FrameDelay”), but the result is just the camera signal flashing on and off. The patch is like this:

(Blending mode is Difference).

Maybe it behaves like that because of dropped frames?

I can’t find an operator that gives control over the delay time of a texture.

Can someone guide me through this?

Thank you so much

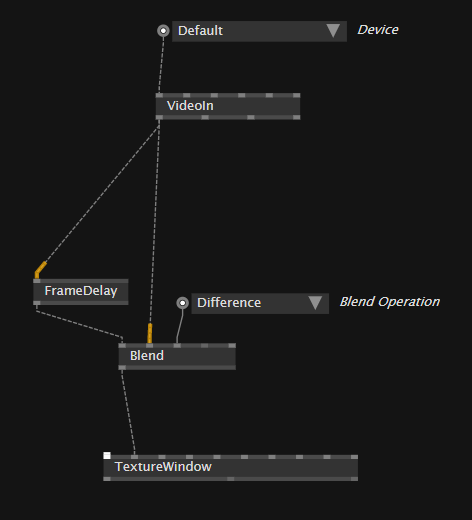

Maybe try with FrameDelay instead? Would that work for you?

I think this is actually called “optical flow”. I think there was an implementation; try searching the forum.

Hi,

for this you need Echo TextureFX, Feedback Compute or RingBuffer (WarpTime).

@nissidis Now it works! I was pretty sure I already tried with FrameDelay… Don’t know why but now it works fine.

However, it still flashes on and off sometimes, roughly every 4-5 seconds.

@antokhio Optical flow is for retrieving the direction of the movement; the result is colored in red and green. I just need to see a one-channel image with the difference between the current and the previous sample, highlighting what’s moving. What’s static will be black.

@lecloneur Thanks, but I cannot find any of the operators you listed… Can you tell me more about them?
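To make the idea concrete outside of a patch, here is a minimal sketch in plain Python of the difference image described above: the current frame minus a frame delayed by a configurable number of frames, with static areas coming out black. The names `FrameDifference`, `delay` and `process` are made up for illustration and are not vvvv nodes, and frames are assumed to be one-channel images stored as lists of rows with values in 0..1.

```python
from collections import deque


class FrameDifference:
    """Absolute difference between the current frame and the frame `delay` frames ago.

    Hypothetical helper for illustration only; frames are lists of rows of floats.
    """

    def __init__(self, delay=1):
        if delay < 1:
            raise ValueError("delay must be at least 1 frame")
        # Keeps the last `delay` frames; the oldest entry is the delayed frame.
        self.history = deque(maxlen=delay)

    def process(self, frame):
        if len(self.history) < self.history.maxlen:
            # Not enough history yet: output a black image.
            out = [[0.0 for _ in row] for row in frame]
        else:
            delayed = self.history[0]
            out = [
                [abs(cur - old) for cur, old in zip(cur_row, old_row)]
                for cur_row, old_row in zip(frame, delayed)
            ]
        # Store the current frame; the deque drops the oldest one automatically.
        self.history.append(frame)
        return out


if __name__ == "__main__":
    diff = FrameDifference(delay=1)
    still = [[0.0, 0.0], [0.0, 0.0]]
    moved = [[0.0, 1.0], [0.0, 0.0]]
    for frame in (still, moved, moved):
        print(diff.process(frame))
```

Only the second output contains a non-zero pixel: once the scene stops changing, the difference goes back to black. A larger `delay` compares against an older frame, which is the "control over the delay time" asked about earlier, at the cost of keeping more frames in memory.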

Thanks everyone!

@Szerg Have you had a look at OpenCV’s background subtraction nodes? They sound a bit like what you are after (black is unchanged, white is “new”).

@ravazquez Thanks, I will take a look!

I imagine the principle behind background subtraction is: the image with me (for instance) in front of the background, minus the image of the background alone, leaving only me.

With the time component (delaying the texture) you retrieve the motion instead, i.e. whether something is moving.

If I don’t move, in the case of background subtraction, the background will still be removed.

Anyway, I’m looking at the CV package with a lot of interest!

Hey @Szerg, it’s been a while since I used these, but if I remember correctly all BG Subtractors work by using a set of initial images (initialization frames) to determine a static BG image. They then compare the “current” image to that reference and return a mask that is black where the image matches the reference and white where it differs, in both cases decided by a threshold.
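As a rough illustration of that principle (and not of how the OpenCV subtractors are actually implemented, which I have not checked), here is a small plain-Python sketch: average a few initialization frames into a reference image, then threshold the absolute difference against it. `StaticBackgroundSubtractor`, `init_frames` and `threshold` are hypothetical names, and frames are assumed to be one-channel lists of rows with values in 0..1.

```python
class StaticBackgroundSubtractor:
    """Builds a static reference from the first frames, then returns a 0/1 mask.

    Hypothetical sketch of the principle only; not an OpenCV or vvvv API.
    """

    def __init__(self, init_frames=3, threshold=0.1):
        if init_frames < 1:
            raise ValueError("init_frames must be at least 1")
        self.init_frames = init_frames
        self.threshold = threshold
        self.background = None  # reference image, set after initialization
        self._sum = None        # running per-pixel sum of initialization frames
        self._count = 0

    def process(self, frame):
        if self.background is None:
            # Initialization phase: accumulate frames and output a black mask.
            if self._sum is None:
                self._sum = [[0.0 for _ in row] for row in frame]
            for y, row in enumerate(frame):
                for x, value in enumerate(row):
                    self._sum[y][x] += value
            self._count += 1
            if self._count == self.init_frames:
                self.background = [
                    [total / self._count for total in row] for row in self._sum
                ]
            return [[0 for _ in row] for row in frame]
        # Normal phase: 1 (white) where the pixel differs from the reference.
        return [
            [1 if abs(value - ref) > self.threshold else 0
             for value, ref in zip(row, ref_row)]
            for row, ref_row in zip(frame, self.background)
        ]


if __name__ == "__main__":
    subtractor = StaticBackgroundSubtractor(init_frames=2, threshold=0.1)
    empty_room = [[0.2, 0.2]]
    with_person = [[0.2, 0.9]]
    for frame in (empty_room, empty_room, with_person, with_person):
        print(subtractor.process(frame))
```

Note that the last two outputs are identical: unlike the frame-delay difference, a subject that stands still stays white in the mask, because the comparison is against the fixed reference rather than the previous frame.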

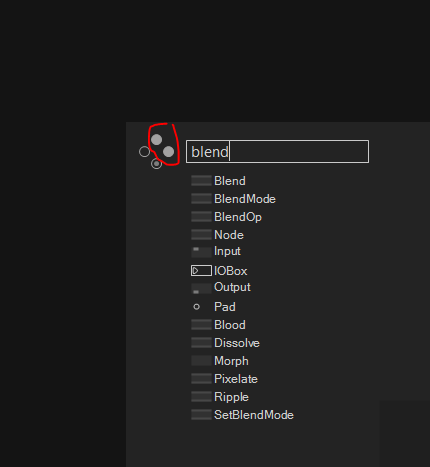

@Szerg You need to make sure to add the Stride.VL runtime component to the project; after that, make sure to have this enabled.
