vvvv could solve problems!

The process of problem solving is tightly bound to the restructuring of cognitive schemas. Restructuring means that the connections between schemas are dissolved and new connections are established.

vvvv nodes are exactly like schemas (node = cognitive schema). That’s why vvvv is so easy for non-programmers to understand.

When the output of your patch is not what you expected, you restructure it: you link the nodes in a different pattern or insert new nodes.

We are very close to making vvvv ABLE TO SOLVE PROBLEMS!

A new vvvv feature is needed that resets the connections between the nodes and rebuilds them in a new way. Repeatedly. Randomly. Until the desired result is reached. See [trial-and-error learning](http://en.wikipedia.org/wiki/Trial_and_error).
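Outside of vvvv, this loop is a plain generate-and-test search. The following is a minimal Python sketch, not an existing vvvv feature: `trial_and_error`, `generate` and `accept` are hypothetical names, where `generate` rebuilds a candidate from scratch on every try and `accept` stands in for the check "is this the desired result?".

```python
import random
from typing import Callable, Optional, Tuple, TypeVar

T = TypeVar("T")


def trial_and_error(
    generate: Callable[[random.Random], T],
    accept: Callable[[T], bool],
    max_tries: int = 10_000,
    seed: int = 0,
) -> Tuple[Optional[T], int]:
    """Build a new random candidate, test it, and repeat until one is accepted.

    Returns (candidate, tries); candidate is None if max_tries is exhausted.
    """
    rng = random.Random(seed)  # fixed seed keeps a run reproducible
    for tries in range(1, max_tries + 1):
        candidate = generate(rng)  # reset and rebuild from scratch
        if accept(candidate):      # desired result reached?
            return candidate, tries
    return None, max_tries
```

For example, `trial_and_error(lambda rng: rng.randint(0, 9), lambda n: n == 7)` keeps drawing random digits until it draws a 7. The `max_tries` limit stops the loop if no candidate ever passes.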

Example: let’s say I am looking for the solution of the linear equation ax + b = y. I know how to calculate y from a, b and x, but what if I am looking for x and know a, b and y?

One more piece of information is available: the relation between a, b and y is built from the four basic operations +, -, *, /.

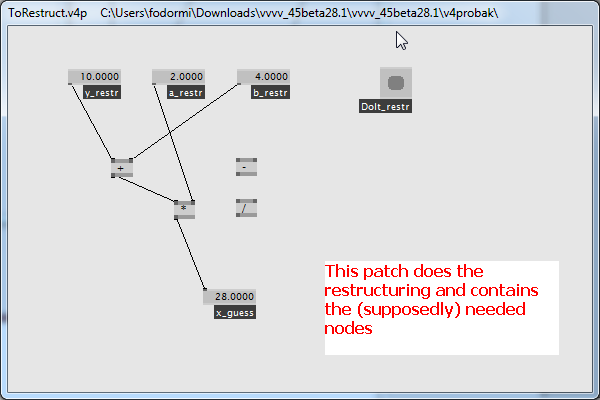

I make a (sub-)patch containing the needed nodes (+, -, *, /) and try to link them so that the output of this sub-patch equals x.

I give them the initial values a = 2, b = 4, y = 10 and start restructuring the links, expecting the sub-patch to output x = 3 (because 2 · 3 + 4 = 10).
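Solving the equation by hand shows which structure the search should arrive at: a subtraction node feeding a division node (assuming a ≠ 0), which indeed gives 3 for these trial values.

$$
ax + b = y \;\Longrightarrow\; x = \frac{y - b}{a}, \qquad x = \frac{10 - 4}{2} = 3
$$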

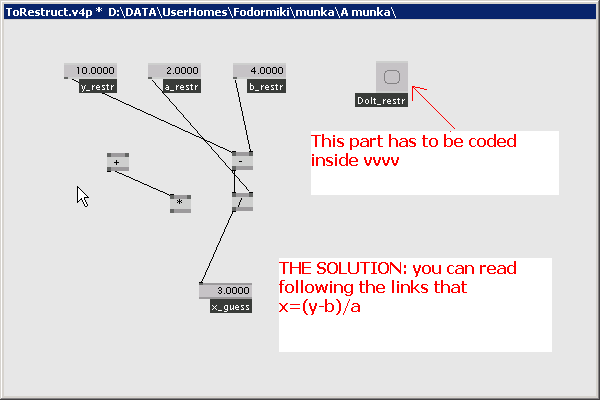

As a double-check, once the output matches x, I try different numbers: a = 3, b = 7, y = 22, where the expected result is x = 5.
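Why the second set of numbers matters can be checked in a few lines of Python (an illustration outside vvvv; `passes`, `correct` and `lucky` are hypothetical names). A wrong structure such as y / a - a happens to give 3 for the first trial values, but only (y - b) / a survives both cases.

```python
import math

# (a, b, y, expected x) trial values taken from the text.
CASES = [(2, 4, 10, 3), (3, 7, 22, 5)]


def passes(candidate, cases):
    """True if candidate(a, b, y) returns the expected x for every case."""
    return all(math.isclose(candidate(a, b, y), x) for a, b, y, x in cases)


def correct(a, b, y):
    return (y - b) / a


def lucky(a, b, y):
    # Wrong structure that coincidentally matches the first case: 10/2 - 2 = 3.
    return y / a - a


print(passes(lucky, CASES[:1]), passes(lucky, CASES))  # True False
print(passes(correct, CASES))                          # True
```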

Video presentation of the theory: http://www.youtube.com/watch?v=yFwHiJyUpfE

Suggested solution:

- A table is needed that contains the links (including source and destination pin IDs). I found this information in the XML file, but changing it there does not change the patch’s behavior: you have to save it first and perhaps reload it.
- A feature should be implemented that checks which pins can be connected to which. This could be a filter on the table mentioned above, so that pins with different data types are never connected.
- The last thing to create is a “testing routine” that checks whether the result is what we expect. It could feed the whole patch with different inputs (e.g. a spread) and test whether the output matches the expected output spread. It keeps restructuring until the desired internal structure of the patch has emerged.

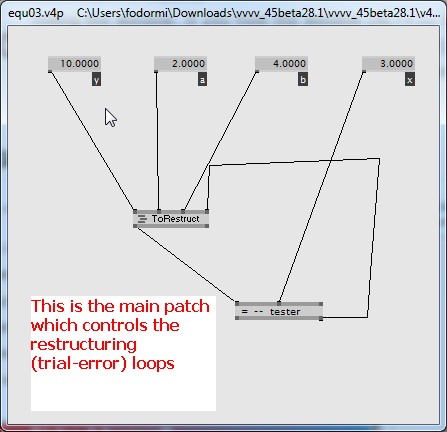

The main patch managing the search; it contains the trial values (a, b, y) and the expected output (x).

An example of randomly created links that deliver the wrong output.

The solution: after several trial cycles, the patch’s internal structure finally arises so that the output is what we expected.

Finally, the tester no longer issues a command for further restructuring, because the output equals the expected value.