Hi Martin,

thanks for the great contribution.

How can I use/convert a video texture instead of a mesh as the input for particle positions?

http://unitzeroone.com/labs/particleVideo/

hi,

Use a control texture for your shader. You can encode xyz(w) positions into the rgb(a) channels. An example: displacement-map

Check unc's contribution; maybe it's useful for you.
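To make the encoding idea concrete, here is a minimal sketch in plain Python rather than shader code. The 8-bit channels, the [-1, 1] coordinate range, and the function names `encode_position` and `decode_position` are assumptions for illustration only, not anything taken from the patches in this thread.

```python
# Sketch only: 8-bit channels and a [-1, 1] coordinate range are assumptions.

def encode_position(pos, lo=-1.0, hi=1.0):
    """Pack xyz(w) coordinates in [lo, hi] into 8-bit rgb(a) values."""
    out = []
    for c in pos:
        c = min(max(c, lo), hi)          # clamp into the representable range
        t = (c - lo) / (hi - lo)         # normalise to 0..1
        out.append(int(t * 255 + 0.5))   # quantise to 0..255
    return tuple(out)

def decode_position(rgba, lo=-1.0, hi=1.0):
    """Recover approximate coordinates from 8-bit rgb(a) values."""
    return tuple(lo + (c / 255.0) * (hi - lo) for c in rgba)

pixel = encode_position((-1.0, 0.0, 1.0, 1.0))
restored = decode_position(pixel)
```

With 8 bits per channel the round trip is only accurate to about one 255th of the range, so a floating-point texture format would be the better fit if one is available.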

sites/default/files/user-files/Metallica_2.zip

hi,

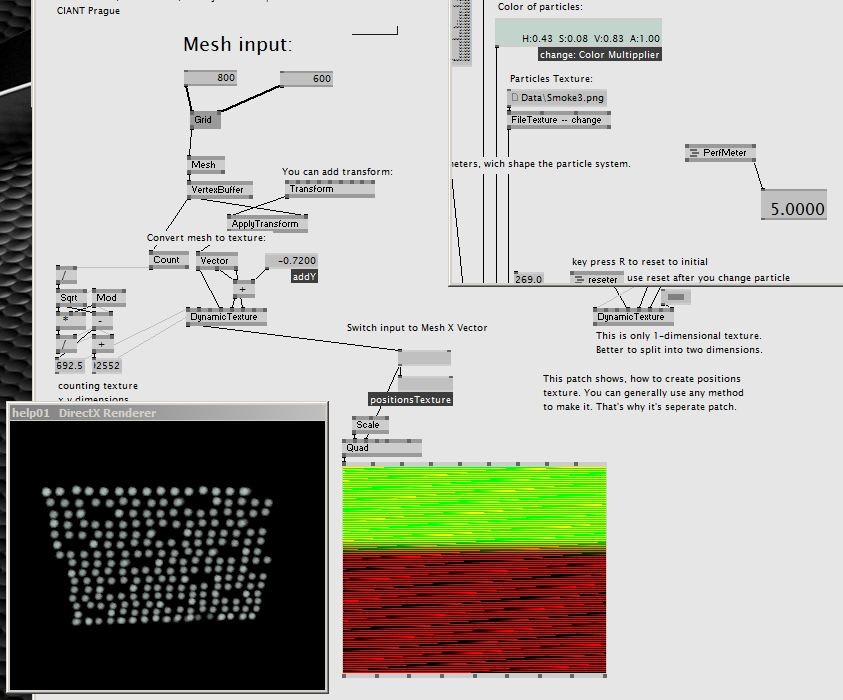

There is a subpatch “CiantCreatePositionsTexture”:

I am looking for a way to replace “Mesh to Dynamic Texture” with “Video Texture to Dynamic Texture”.

If I start with a Grid of 800x600, I get only 5 fps… I don't think that's the right way.

@dEp

How can I get xyz(w) positions from a video texture?

@lasal

Metallica: I see just an advanced Emboss shader there, nothing more…

How do I produce particles with that?

Hey,

Maybe Metallica is more useful with a 2D particle field; check dotore's example.

ciao

So for the effect in the Flash example you linked to, you will want the spawn positions of your particles to be random, with each particle attracted to a certain pixel on the screen based on its luminance. You will have to do more than modify the spawn position texture: you will have to add an attractor field texture to your particle system and feed the luminance of your video into it.
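A rough CPU-side sketch of that idea in plain Python, just to show the logic. The Rec. 601 luminance weights, the `rate` parameter, and the function names are assumptions for illustration; in the patch this would live in the particle shader, not in Python.

```python
# Sketch only: weights, names and the update rule are illustrative assumptions.

def luminance(rgb):
    """Rec. 601 luma of an rgb triple with channels in 0..1."""
    r, g, b = rgb
    return 0.299 * r + 0.587 * g + 0.114 * b

def step_towards(pos, target, lum, rate=0.1):
    """Move a particle towards its target pixel; brighter pixels pull harder."""
    pull = rate * lum
    return tuple(p + (t - p) * pull for p, t in zip(pos, target))

# One particle spawned at the origin, attracted to a white pixel at (1, 1).
white = (1.0, 1.0, 1.0)
moved = step_towards((0.0, 0.0), (1.0, 1.0), luminance(white), rate=0.5)

# A black pixel exerts no pull, so the particle stays where it spawned.
stuck = step_towards((0.0, 0.0), (1.0, 1.0), luminance((0.0, 0.0, 0.0)))
```

Calling `step_towards` once per frame makes randomly spawned particles drift towards the bright parts of the video, while dark areas leave them alone.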

J

Hi,

DiMix, I didn't know about this forum. I'm quite new to the v4 community :) Thanks for letting me know in the contribution thread.

The problem with your method is that you create a very big mesh every frame. That's why it's so slow, I guess. Try to put it on the right side, instead of a Mesh input.

Take the video texture and make a gradient texture from it. That is, each texel should hold a direction in rgb. You will probably need to sample the texture four times for each pixel (look at what's up, down, left, and right, and build the direction from that). This texture will be the force.
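The four-lookup gradient can be sketched like this in plain Python. It assumes the video frame has already been reduced to a 2D grid of luminance values in 0..1, uses central differences, clamps at the borders, and stores the direction in the red and green channels. All of these choices and the function names are assumptions for illustration.

```python
# Sketch only: central differences on a luminance grid, clamped at the edges.

def gradient_at(img, x, y):
    """Direction at (x, y) from the left/right and up/down neighbours."""
    h, w = len(img), len(img[0])
    left = img[y][max(x - 1, 0)]
    right = img[y][min(x + 1, w - 1)]
    up = img[max(y - 1, 0)][x]
    down = img[min(y + 1, h - 1)][x]
    return ((right - left) * 0.5, (down - up) * 0.5)

def encode_direction(gx, gy):
    """Map a direction in [-1, 1] to rgb in [0, 1] (0.5 means 'no force')."""
    return (gx * 0.5 + 0.5, gy * 0.5 + 0.5, 0.5)

def force_texture(img):
    """Build the whole force texture, one rgb direction per texel."""
    return [[encode_direction(*gradient_at(img, x, y))
             for x in range(len(img[0]))]
            for y in range(len(img))]

# A horizontal ramp: brightness increases from left to right.
ramp = [[0.0, 0.5, 1.0],
        [0.0, 0.5, 1.0],
        [0.0, 0.5, 1.0]]
force = force_texture(ramp)
```

For the ramp, every texel points to the right (red above 0.5) with no vertical component (green exactly 0.5), which is what a force pulling particles towards brighter areas should look like.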

For the source texture, use a shader and put the value of red as the particle X position and blue as the Y position. This will make a plane. Combine the two and you've got the effect.

I will release a combination of CiantParticles and Kinect very soon. It will include something close to the effect you want.
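As a plain-Python illustration of that source texture: each texel stores its own normalised coordinate, X in red and Y in blue as described above, so the particles start out laid out as a flat plane. The 0..1 range, the unused green channel, and the name `plane_position_texture` are assumptions for this sketch.

```python
# Sketch only: red = X, blue = Y, green left at 0; range 0..1 is an assumption.

def plane_position_texture(width, height):
    """Return a height x width grid of (r, g, b) texels forming a plane."""
    tex = []
    for j in range(height):
        v = j / (height - 1) if height > 1 else 0.0
        row = []
        for i in range(width):
            u = i / (width - 1) if width > 1 else 0.0
            row.append((u, 0.0, v))   # X in red, nothing in green, Y in blue
        tex.append(row)
    return tex

tex = plane_position_texture(3, 2)
```

Here `tex[0][0]` is the corner at the origin and `tex[1][2]` is the opposite corner, with the texels in between spaced evenly.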

Enjoy your holidays!

Martin.

Hi Martin,

thanks for the reply, and welcome to the forum.

For the moment I have found only one way to convert a VideoTexture to a gradient (PositionTexture or ForceTexture): VideoTexture > (spread) Pipet - EX9.Texture Simple > RGB - ColorSplit

But Pipet is also very slow (for me, 256x256 = 10 fps).

Is there another way to extract data from a texture to get the RGB values?

here are some threads about:

Which shader in particular do you mean?

Well, I meant a new shader that you create specially to access the data :) My guess is you have to do this on the graphics card, but I'm not sure what v4 can do.

Take the texture and a geometry->grid and send them to a new shader (template->effect). In the vertexShader, double the XY size, and in the pixelShader you can access the texture that you made from the video. Convert the rendered layer to a texture and send it to CiantParticleLoop as a new force.

I hope to release the Kinect part today, then you will see.