I would love to get back to doing some live visuals with vvvv, but I feel super demotivated by the lack of a convenient pipeline for rigs. In modern asset workflows and creation, rigs are truly everywhere, and this has always been one of the parts of the vvvv pipeline that did not get a lot of love imo.

There are a few threads around here:

Sadly, they ended with very little info, and the process they describe is extremely hard to work with.

There is also a channel on Element for skeletons:

What I would love to do with this thread is gather experiences from other users, their expectations and workarounds, maybe some info on future plans from the devs, and collect a general direction for what we could perhaps one day have in Stride/gamma. I do understand there are more pressing issues and a lot of work to do; this is just a shout-out to this topic. We are all skeletons inside, so we should give skeletons some love.

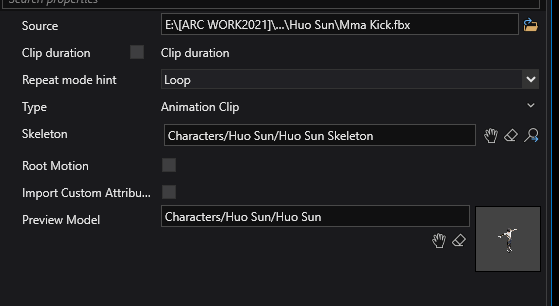

What are the biggest hurdles to this effort? For example, animations have to be imported from separate files, and only files from Blender up to a certain version work for this, which is quite a big hurdle.

Maybe, if there is enough interest in this topic and there are people with experience in it, we might be able to kickstart a project that would help us achieve a good skeleton implementation, and everybody could pitch in some funds.

So what should a good skeleton implementation look like?

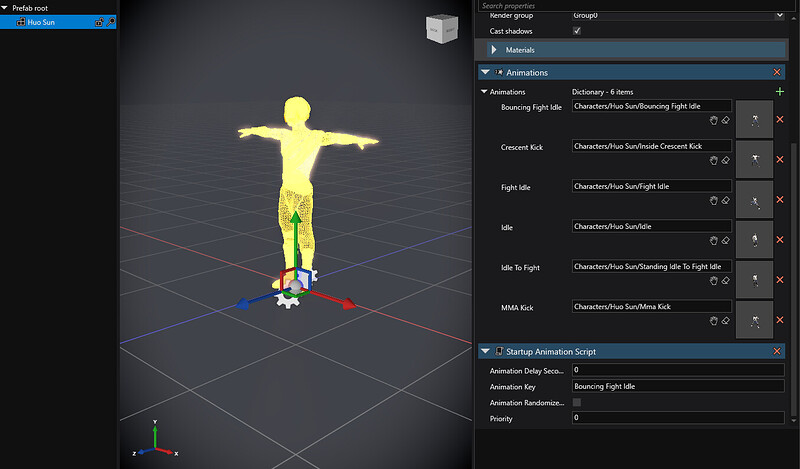

In my opinion, on one hand it should be a general system for loading skeleton models, preferably one that works well with Blender, since we like to keep things in the realm of easy access. Once in gamma, we should have tools to easily patch a state machine for the animations.
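To make the state machine idea a bit more concrete, here is a minimal sketch in Python. It is not vvvv or Stride code, and every name in it (`AnimationStateMachine`, the clip names, the events) is made up for illustration. The states stand for animation clips, and named events switch between them.

```python
from dataclasses import dataclass, field


@dataclass
class AnimationStateMachine:
    """Tiny animation state machine: states are clip names, events trigger transitions."""

    current: str
    # Maps (state, event) -> next state. Hypothetical structure, not a vvvv API.
    transitions: dict = field(default_factory=dict)

    def add_transition(self, source: str, event: str, target: str) -> None:
        self.transitions[(source, event)] = target

    def fire(self, event: str) -> str:
        # Events with no matching transition leave the current clip playing.
        self.current = self.transitions.get((self.current, event), self.current)
        return self.current


def build_demo_machine() -> AnimationStateMachine:
    # Hypothetical clips and events, only to show the wiring.
    sm = AnimationStateMachine(current="idle")
    sm.add_transition("idle", "move", "walk")
    sm.add_transition("walk", "stop", "idle")
    sm.add_transition("walk", "jump", "jump")
    sm.add_transition("jump", "land", "idle")
    return sm
```

In a patch, the same idea would probably be a node holding the current clip and a table of transitions, with the blending between clips handled separately.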

On the other hand, we need a system to customize skeletons. It would be great to be able to open the Stride editor and prepare the structure of the rig, attach rigid bodies, change their sizes, and adjust some basic settings. There should be an easy way to create IK chains to make weird stuff: scorpions, alien shapes with many appendages, etc… But it does not have to be done in the Stride editor; maybe we could come up with the rigging structure directly in gamma, since it's just a graph structure in the scene.
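For anyone who has not worked with IK chains, here is a rough sketch of one common solver, FABRIK, in plain Python and in 2D. It is only meant to show how little data a chain needs (a list of joint positions and a target). It assumes nothing about how Stride or gamma would actually implement this, and the function name and parameters are my own.

```python
import math


def fabrik(joints, target, tolerance=1e-3, max_iterations=32):
    """Solve a 2D IK chain with FABRIK.

    joints: list of (x, y) positions, root first. Returns a new list of positions.
    """
    points = [tuple(p) for p in joints]
    # Bone lengths are taken from the rest pose and kept fixed.
    lengths = [math.dist(points[i], points[i + 1]) for i in range(len(points) - 1)]
    root = points[0]

    def place(anchor, toward, length):
        # Point at distance `length` from `anchor`, in the direction of `toward`.
        d = math.dist(anchor, toward)
        if d == 0.0:
            return (anchor[0] + length, anchor[1])
        t = length / d
        return (anchor[0] + (toward[0] - anchor[0]) * t,
                anchor[1] + (toward[1] - anchor[1]) * t)

    # Target out of reach: stretch the chain in a straight line toward it.
    if math.dist(root, target) >= sum(lengths):
        for i, length in enumerate(lengths):
            points[i + 1] = place(points[i], target, length)
        return points

    for _ in range(max_iterations):
        if math.dist(points[-1], target) <= tolerance:
            break
        # Backward pass: pin the tip to the target and walk toward the root.
        points[-1] = tuple(target)
        for i in range(len(points) - 2, -1, -1):
            points[i] = place(points[i + 1], points[i], lengths[i])
        # Forward pass: pin the root back in place and walk toward the tip.
        points[0] = root
        for i in range(len(points) - 1):
            points[i + 1] = place(points[i], points[i + 1], lengths[i])
    return points
```

Calling `fabrik([(0, 0), (1, 0), (2, 0), (3, 0)], (1.5, 1.5))` bends a three-bone chain so its tip lands near the target while the root stays put. A scorpion tail or a many-legged alien would be several such chains hanging off one skeleton graph.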

All of this should be made to work with the physics engine to get the most out of IK.

Blender and Unity are the systems I am familiar with, and Unity especially has some kickass scripts, similar to Blender bone constraints, that are super nice to work with. You can create IK chains very easily, and it speeds up content creation significantly. Perhaps we could make a Blender addon that exports some additional data with skeletons, which would let Blender act as a sort of editor for skeletons prepared for use in gamma.

Check out the Unity implementation here:

https://docs.unity3d.com/Packages/com.unity.animation.rigging@1.1/manual/RiggingWorkflow.html

To see it in action with live visuals, this is what the system allowed me to do: