Has anyone successfully compressed and streamed any of the Kinect2’s image streams? I’m particularly after the depth image. Ideally I’d have RGB too, but I’m not sure if that’s feasible.

for the streaming part there’s

and

this one is based on node.js

for the compression part just found @vux already made it (sharpdx)

Have you used the room alive toolkit?

If so do you know what it can stream over the network (rgb/depth/skeleton) and how the streams are available on the client side?

just tested the calibration part on a single machine

but you can stream depth and color with the kinectServer.exe…

Thanks, will take a look. Still curious to hear from anyone who has done this already…

The next dx11.pointcloud release will include nodes that allow streaming (kinect1/2) pointclouds via network! ;)

oooh!

sockets?

Someone is having a look at the RoomAlive Toolkit for me btw. Looks promising

with the help of a very cool zeromq implementation by velcrome.

i am testing the router-dealer pattern at the moment so that several clients can send their pointclouds to a single server on a single port.
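For anyone unfamiliar with the router-dealer pattern, here is a minimal sketch in Python with pyzmq. It is not the dx11.pointcloud implementation, just an illustration: the client names (`kinect-a`, `kinect-b`), the `frame` header and the dummy payloads are all made up. Each client is a DEALER with its own identity, and the server is a single ROUTER bound to one port that sees the sender's identity as the first message part.

```python
import zmq


def run_demo():
    """Two DEALER clients send one dummy frame each to a single ROUTER server."""
    ctx = zmq.Context()

    # Server side: one ROUTER socket on one port collects data from all clients.
    router = ctx.socket(zmq.ROUTER)
    port = router.bind_to_random_port("tcp://127.0.0.1")

    # Client side: every DEALER gets its own identity (hypothetical names).
    dealers = []
    for name in (b"kinect-a", b"kinect-b"):
        dealer = ctx.socket(zmq.DEALER)
        dealer.setsockopt(zmq.IDENTITY, name)  # must be set before connect
        dealer.connect(f"tcp://127.0.0.1:{port}")
        dealers.append(dealer)

    # Each client sends a header part plus a placeholder payload.
    for i, dealer in enumerate(dealers):
        dealer.send_multipart([b"frame", bytes([i]) * 12])

    # ROUTER prepends the sender identity, so the server knows the source.
    received = {}
    for _ in dealers:
        if not router.poll(timeout=5000):  # give up after 5 s
            break
        identity, header, payload = router.recv_multipart()
        received[identity] = payload

    for sock in dealers + [router]:
        sock.close(linger=0)
    ctx.term()
    return received


if __name__ == "__main__":
    for identity, payload in run_demo().items():
        print(identity, len(payload), "bytes")
```

In a real setup the payload would be the (compressed) pointcloud or depth buffer, and the server would keep one buffer per identity.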

Hi,

These days I’m researching different possibilities for mixing several Kinect 2 devices.

Looking forward to dx11.pointcloud streaming pointclouds. Is it already available?

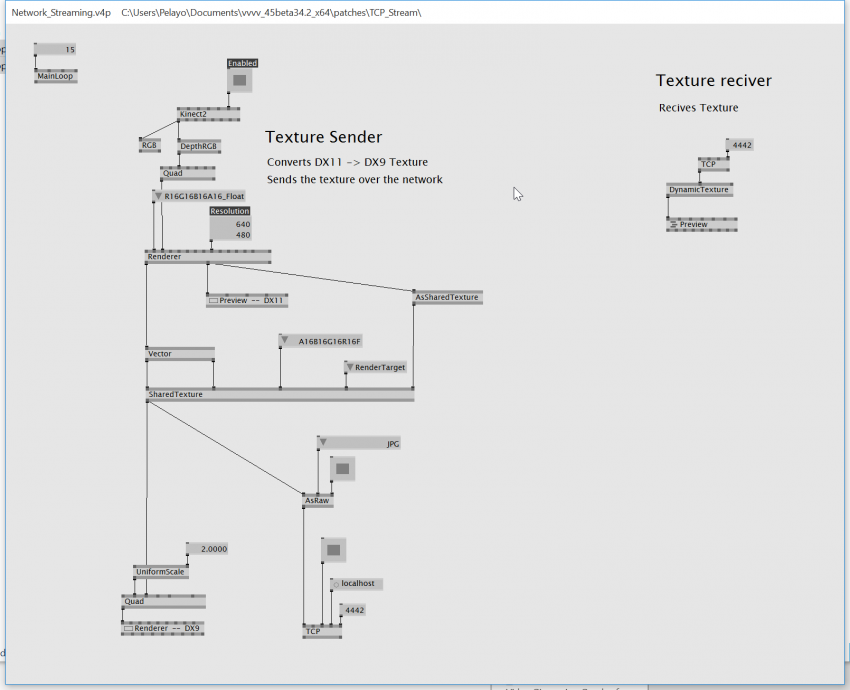

This is how I’m sending Kinect 2 images through the network after researching a bit in the forum.

Hope it helps someone.

Hey I’m curious if anyone got this to work in the meantime?

I got the depthRGB streaming but not the kinect2 Depth or pointcloud data

checkout the latest version of dx11-pointcloud here:

and have a look at the client and server nodes. unfortunately I had not enough time to test them in detail, yet. but they could be a good start for you :)

Oke thanks I tested them… there seems to be something wrong with the AsRaw(DX11.Pointcloud Kinect) maybe it needs another kind of pointcloudbuffer in there…?

I made a workaround with the AsRawAdvanced and FromRawAdvanced and it works with 2 fps now

Hi,

I tried the patches in dx11-pointcloud-master\research, but it seems that the AsRaw nodes don’t do the job.

Did I miss something?

I am looking for a way to record Pointclouds from Kinect in VVVV.

Aurelien

Hi @Aurel,

Kinect gives you the depth map, which is already a representation of a pointcloud. You just need to record it and play it back. You may store the depth as DDS files in R16 format.
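Outside of vvvv, the same record-and-play-back idea can be sketched in a few lines of Python. Note that this swaps the DDS files for a simple length-prefixed zlib container, purely as an illustration of storing 16-bit depth losslessly. The function names are made up, and the 512x424 resolution is the Kinect 2 depth size.

```python
import array
import struct
import zlib

WIDTH, HEIGHT = 512, 424  # Kinect 2 depth resolution


def pack_frame(depth_mm):
    """Losslessly compress one frame of 16-bit depth values (millimetres)."""
    raw = array.array("H", depth_mm).tobytes()  # 2 bytes per pixel, like R16
    packed = zlib.compress(raw, 1)              # level 1: fast, modest ratio
    # Prefix with the compressed size so frames can be concatenated in a file.
    return struct.pack("<I", len(packed)) + packed


def unpack_frames(blob):
    """Yield the depth frames (lists of ints) stored back to back in blob."""
    offset = 0
    while offset < len(blob):
        (size,) = struct.unpack_from("<I", blob, offset)
        offset += 4
        raw = zlib.decompress(blob[offset:offset + size])
        offset += size
        frame = array.array("H")
        frame.frombytes(raw)
        yield frame.tolist()


if __name__ == "__main__":
    # Synthetic test frame; a real recording would use the sensor data.
    frame = [(x * 7 + y) % 4500 for y in range(HEIGHT) for x in range(WIDTH)]
    blob = pack_frame(frame)
    print(len(blob), "bytes compressed,", WIDTH * HEIGHT * 2, "bytes raw")
```

The pixel values use the machine's native byte order here, so a recording should be played back on the same kind of machine or the byte order should be fixed explicitly.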

Make sure to store the Raytable of the device as well. Each Kinect One device is slightly different: when you use a generic formula to interpret depth as XYZ positions, or a Raytable from a different device, the positions in the pointcloud may be off by dozens of centimeters. As an alternative, you may want to store the World texture, which already contains correct XYZ coordinates, but that makes you save 8x more data (R16 vs. R32G32B32A32).
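To make the Raytable idea and the 8x figure concrete, here is a rough Python sketch. It assumes the Raytable holds one (x, y) factor pair per depth pixel that gets multiplied by the depth, which is how the Kinect SDK's depth-to-camera-space table is commonly used. The function name and the sample values are hypothetical.

```python
WIDTH, HEIGHT = 512, 424  # Kinect 2 depth resolution


def depth_to_xyz(depth_mm, ray_table):
    """Turn depth values (mm) into XYZ points (metres) with a per-pixel ray table.

    ray_table holds one (rx, ry) pair per pixel (assumed layout); pixels with
    depth 0 (no reading) end up at the origin and should be filtered out.
    """
    points = []
    for d, (rx, ry) in zip(depth_mm, ray_table):
        z = d / 1000.0
        points.append((rx * z, ry * z, z))
    return points


# Storage per frame: R16 is 2 bytes per pixel, R32G32B32A32 is 16 bytes.
R16_BYTES = 2
RGBA32F_BYTES = 16
depth_frame_bytes = WIDTH * HEIGHT * R16_BYTES      # 434176
world_frame_bytes = WIDTH * HEIGHT * RGBA32F_BYTES  # 3473408

if __name__ == "__main__":
    print(depth_to_xyz([1000, 2000], [(0.5, -0.25), (0.0, 0.1)]))
    print(world_frame_bytes // depth_frame_bytes, "x more data for World")
```

So recording depth plus one Raytable per device costs about 0.43 MB per frame, against about 3.5 MB per frame for the World texture.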

A safe and versatile, but disk-space-consuming, method is to record the Kinect stream with Kinect Studio. 1 minute is about 6 GB. Use the version from the Kinect 2.0 SDK or compile a recent one from https://github.com/angelaHillier/Kinect-Studio-Sample

There are also nodes to do this: https://vvvv.org/contribution/microsoft-kinect-2.0-tools