Cool thoughts, I was also already thinking about wrapping some common shader functions in a simple modular framework. But I think it's another topic, since tonfilm is probably asking for something similar that can be called with one node, or?

I think the long-term approach is definitely a modular shader that can be constructed in a node graph. Any non-trivial material will need more than what one uber-shader could realistically provide. Any VFX person is used to this workflow as well; parameter-driven shaders are, like, so 2010.

Tangentially on CUDA:

I recommend managedCUDA, which lets you write bare-metal C kernels but compile them within C# and is available on NuGet. I have a working vvvv node which will be using CUDA for raytracing and it seems to work great.

to draw a quick summary without going into details:

- pro vvvv users would apparently sacrifice simplicity for flexibility and quality.

- metal/roughness is the preferred workflow

- substance designer defines some kind of standard

however, the first goal should be a node that you put into a patch, connect geometry and renderer and that’s it. This node could of course be a module that internally uses a shader and default textures/cubemap…

inputs would ideally be same as Phong, but only one color input with additional metalness and roughness inputs. also only one light for a start…

any objections?
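A minimal sketch of what "one color input plus metalness" usually means in a metal/roughness workflow, written in Python only for illustration (a real node would do this in HLSL). The function name `split_base_color` and the 4% dielectric reflectance are assumptions taken from common convention, not from any existing vvvv shader.

```python
# Assumption: dielectrics reflect about 4% at normal incidence, a common
# default in metal/roughness pipelines.
DIELECTRIC_F0 = 0.04


def lerp(a, b, t):
    """Linear interpolation between a and b."""
    return a + (b - a) * t


def split_base_color(base_color, metalness):
    """Derive diffuse albedo and specular F0 from a single color input.

    base_color: (r, g, b) in linear space, each 0..1
    metalness:  0 = dielectric, 1 = metal (hypothetical pin name)
    """
    m = min(max(metalness, 0.0), 1.0)
    # Metals have no diffuse term, so the albedo fades out with metalness.
    diffuse = tuple(c * (1.0 - m) for c in base_color)
    # Dielectrics use the fixed F0, metals tint the reflection with the color.
    f0 = tuple(lerp(DIELECTRIC_F0, c, m) for c in base_color)
    return diffuse, f0


if __name__ == "__main__":
    print(split_base_color((1.0, 0.5, 0.0), 0.0))
    print(split_base_color((1.0, 0.5, 0.0), 1.0))
```

With this split, the single color pin drives the diffuse look for plastics and the reflection tint for metals, which is why a separate specular color input is not needed.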

My only suggestion is to sanely normalize values such as roughness so that you get a nice linear transition. I’ve seen some nice reference charts which are handy for artists.

If you leave the values raw you end up getting a lot of change in very small value increments which is a bit counter-intuitive.
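One common way to do such a remapping, sketched in Python for illustration: treat the slider as a "perceptual" roughness and square it before it goes into the GGX distribution, which is the convention Disney's principled shader uses. The names `ggx_alpha` and `ggx_ndf` and the lower clamp value are assumptions made for this sketch.

```python
import math


def ggx_alpha(perceptual_roughness, min_roughness=0.03):
    """Map the artist-facing slider (0..1) to the GGX alpha parameter.

    The lower clamp is an arbitrary choice for this sketch; it only keeps
    the highlight from collapsing to an infinitely small point at 0.
    """
    r = min(max(perceptual_roughness, min_roughness), 1.0)
    return r * r


def ggx_ndf(n_dot_h, alpha):
    """GGX / Trowbridge-Reitz normal distribution function."""
    a2 = alpha * alpha
    d = n_dot_h * n_dot_h * (a2 - 1.0) + 1.0
    return a2 / (math.pi * d * d)


if __name__ == "__main__":
    # Compare highlight peak height for raw vs. squared slider values.
    for slider in (0.2, 0.4, 0.6, 0.8, 1.0):
        raw = ggx_ndf(1.0, slider)
        remapped = ggx_ndf(1.0, ggx_alpha(slider))
        print(f"slider={slider:.1f} raw_peak={raw:.3f} remapped_peak={remapped:.3f}")
```

Whether squaring is the right curve for a given shader is a judgment call; the reference charts mentioned above are a good way to check the result visually.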

Also I’d be excited to help develop this. Email me at polyrhythm@gmail.com if you’re looking for contributors

any progress here? tmp’s multipointlight sets an example of simplicity. so i agree with tonfilm on the workflow: create node, connect geometry… done.

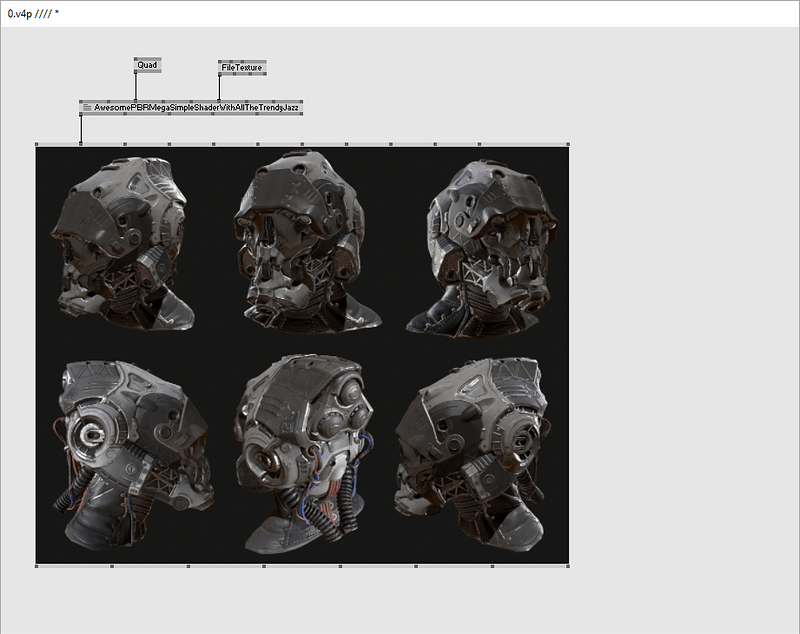

something like this?

/sarcasm

I’m pretty much getting along with Disney, I’ll just need to do some handholding help patches and voila you can have more or less the above picture with only a single shader node and its accompanying PreprocessorOptions node.

@microdee as long as the inside of the module is just having a shader and filetextures and not eating CPU cycles like crazy, yes

I’m working on an updated version of SuperPhysical which hides away most of the complexity and logic in VL modules and custom VL datatypes. for example a material datatype which joins all the settings and textures. I think at least for this contribution it is the best way to have both a complex shader and a simple setup.

@mburk SuperPhysical FTW! Could you also please try to include a way to work better with instancing lots of geometry, especially using the subsets of a Geometry and being able to access and instance those with a transformation buffer? SuperPhysical is so nice already, but from what we have tried so far it hits its limits when it comes to rendering particles and/or instanced geometry.

Otherwise I think SuperPhysical is pretty close to a perfect shader for easy rendering, although I guess Shadows are still not perfect and AO would be nice to be solved at the shader stage, since it adds a lot of realism and the SSAO TextureFX isn’t that great.

Oh, and the aforementioned saturation shift when white light hits a shiny colorful surface should be solved somehow.

@mburk If we can support the development of a VL based material workflow and shader configuration in any way, we’re very happy to do that.

I mean testing, verifying against other usecases, but also financial resources to make some dedicated development time free for that.

microdee’s FBX4V pack has an interesting way of dealing with geometry with many subsets. It unites them into one piece of geometry and still allows addressing them in the shader for transformation and material through buffers, which makes it much more efficient than instantiating the shader for every piece of geometry.
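The general idea can be sketched like this in Python. This is not how FBX4V is actually implemented, just an illustration of the technique: every vertex gets a subset id, and the "shader" looks up its transform in a buffer by that id. For brevity the transform is reduced to a translation, and all function names are hypothetical.

```python
def merge_subsets(subsets):
    """Merge several (positions, indices) subsets into one geometry.

    Returns merged positions, re-based indices and one subset id per
    vertex, so a shader can still tell the pieces apart.
    """
    positions, indices, subset_ids = [], [], []
    for subset_id, (verts, tris) in enumerate(subsets):
        base = len(positions)  # index offset of this subset in the merged buffer
        positions.extend(verts)
        indices.extend(base + i for i in tris)
        subset_ids.extend([subset_id] * len(verts))
    return positions, indices, subset_ids


def apply_transform_buffer(positions, subset_ids, offsets):
    """Stand-in for the vertex shader: per-vertex lookup by subset id.

    offsets plays the role of the transformation buffer; a real shader
    would store a 4x4 matrix per subset instead of a translation.
    """
    return [
        tuple(p[k] + offsets[sid][k] for k in range(3))
        for p, sid in zip(positions, subset_ids)
    ]


if __name__ == "__main__":
    tri = ([(0, 0, 0), (1, 0, 0), (0, 1, 0)], [0, 1, 2])
    pos, idx, ids = merge_subsets([tri, tri])
    print(idx, ids)
    print(apply_transform_buffer(pos, ids, [(0, 0, 0), (5, 0, 0)]))
```

The point is that the whole thing becomes a single draw call with one buffer lookup per vertex, instead of one shader instance per subset.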

I also had a look at microdee’s latest Disney implementation which isn’t up on github yet, might be worth waiting for this as well. The setup of the scene seems pretty clean.

@eno I recall woei implemented those papers for you guys as well, wasn’t that anywhere close to a stripped down PBR Shader?

@readme the scope of that was too big for the time we had during this project, so we made do with ‘traditional’ methods.

Hello everyone,

I just uploaded the latest version of my SuperPhysical development. The material and light setup are completely new. Everything is modularized into custom VL datatypes and operations. This allows you to just connect the features you need and have a very clean setup.

I guess this is my answer to a simple standard shader, since now you don’t have a lot of overhead anymore, if you just want a simple setup.

The VL part is still a work in progress, if anyone has suggestions, please don’t hesitate.

I’m also using the new possibility to upload data from VL to a shader, which works out very nicely.

Integrating glTF would be a nice next step, that should easily be done, as soon as there is a DX11 wrapper.

Check out the help patch for an example setup. The contribution is not heavily tested, so any feedback is welcome.

What is still missing is instancing, but for now I suggest merging instanced geometry before feeding it into the shader.

@eno would be glad to get some feedback regarding the usability of the new system.

@seltzdesign there are a lot of fixes. I find the lighting is working now as I would expect it. also feedback welcome. AO should probably still be a post-effect. I’m planning to implement a nice new algorithm and will share this if it is ready.

Nice to see @mburk

Did you also have a look at https://github.com/KhronosGroup/glTF-WebGL-PBR?

It uses a lot of preprocessor defines.

microdee’s Disney implementation does this as well.

This brought me to the idea of having a shader input that takes such preprocessor defines.

This way we could dynamically adapt the shading depending on what the attributes of the mesh and the textures are providing.

I am adding this preprocessor node to vvvv.js in the attempt to get glTF working there nicely.

Or does this somehow exist already in DX11?
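A rough sketch of that idea in Python, just to make it concrete: look at which vertex attributes and textures are present and derive a list of defines that gets prepended to the shader source. The define names below are illustrative, written in the style of the linked repository, and the attribute and texture keys are assumptions made for this example.

```python
# Hypothetical mapping from mesh attributes / texture slots to defines.
ATTRIBUTE_DEFINES = {
    "NORMAL": "HAS_NORMALS",
    "TANGENT": "HAS_TANGENTS",
    "TEXCOORD0": "HAS_UV",
}
TEXTURE_DEFINES = {
    "baseColor": "HAS_BASECOLORMAP",
    "normal": "HAS_NORMALMAP",
    "metalRoughness": "HAS_METALROUGHNESSMAP",
}


def collect_defines(vertex_attributes, textures):
    """Return a sorted list of defines for the features that are present."""
    defines = {ATTRIBUTE_DEFINES[a] for a in vertex_attributes if a in ATTRIBUTE_DEFINES}
    defines |= {TEXTURE_DEFINES[t] for t in textures if t in TEXTURE_DEFINES}
    return sorted(defines)


def to_preamble(defines):
    """Turn the list into #define lines to put in front of the shader code."""
    return "".join(f"#define {name} 1\n" for name in defines)


if __name__ == "__main__":
    found = collect_defines(["POSITION", "NORMAL", "TEXCOORD0"], ["baseColor"])
    print(to_preamble(found), end="")
```

Sorting the defines keeps the generated preamble stable, so the same mesh and texture combination always yields the same shader variant and can be cached.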

Yes, I thought of that and also already implemented it as a proof of concept. It’s quite easy to chain up defines from the VL modules, but it had no meaningful performance gain since I already have conditional evaluation of parts of the shader. Although this kind of branching is considered pure evil by some, using defines instead did nothing to improve performance, so I shelved this for now. Still worth investigating further, though.

Ah interesting!

But how can you bring the defines into the shader node actually?

I think the performance difference can be hard to measure because modern GPUs optimize the code under the hood and have fewer problems with branching because of this (that’s what I read on Stack Overflow at least).

It might be noticeable in some scenarios where the optimization does not apply.

Yeah, might be true. In vvvv there is a hidden “defines” pin on DX11 shader nodes.

@mburk: Your new SuperPhysical 2.0 leaves me simply speechless. Just WOW!!!

I tried to marry it with the DOF&SSAO contribution from @Anthokio, which I love for its simplicity, only needing depth and projection transform.

Sorry if I mix things up a bit here, but I guess DOF (and maybe SSAO as well if one wants to make things uberphysical) could be very useful in conjunction with SuperPhysical, and something like this might be part of “The New vvvv Standard Shader” as well. Or be somewhere around, who knows.

Now I had to find out that Anthokio’s shaders don’t behave as I know them in the latest Alpha (35.15).

Here I added both shaders into your help patch as post effects to show the problem.

(file needs to be unpacked to \packs\SuperPhysical\nodes\modules and will leave you with two .tfx in the totally wrong place… just delete everything afterwards…)

Maybe this is just something about scaling in depth channel and can be resolved easily?

I would really love to pull a focus on super physical objects…

SuperPhysical help_DOF_SSAO.zip (11.1 KB)

edit: Looks like I had weird settings on those shaders, after building it again from scratch it worked.

look at a DX11 layer node, open inspector and see for yourself ;)

also there’s a PreprocessorOptions node in mp.essentials which creates pins automatically for annotated defines and if defined(…) 's. See its proper and informative help patch for more info.

I have to check out new SuperPhysical.

I’m also in the middle of a huge refactoring since late November both in my C# and my HLSL systems. End result might allow me to create a feasible deferred rendering system again which might actually work this time buuut I don’t have any ETA while I also have to do more intermediate projects :(